INTRODUCTION

Is it possible to fall in love with a mathematical object? If the object in question is the Mandelbrot set, then the answer is a definite yes. This post talks about an iPad app that helps us explore the strange, hypnotic and never-ending beauty of this well-known fractal.

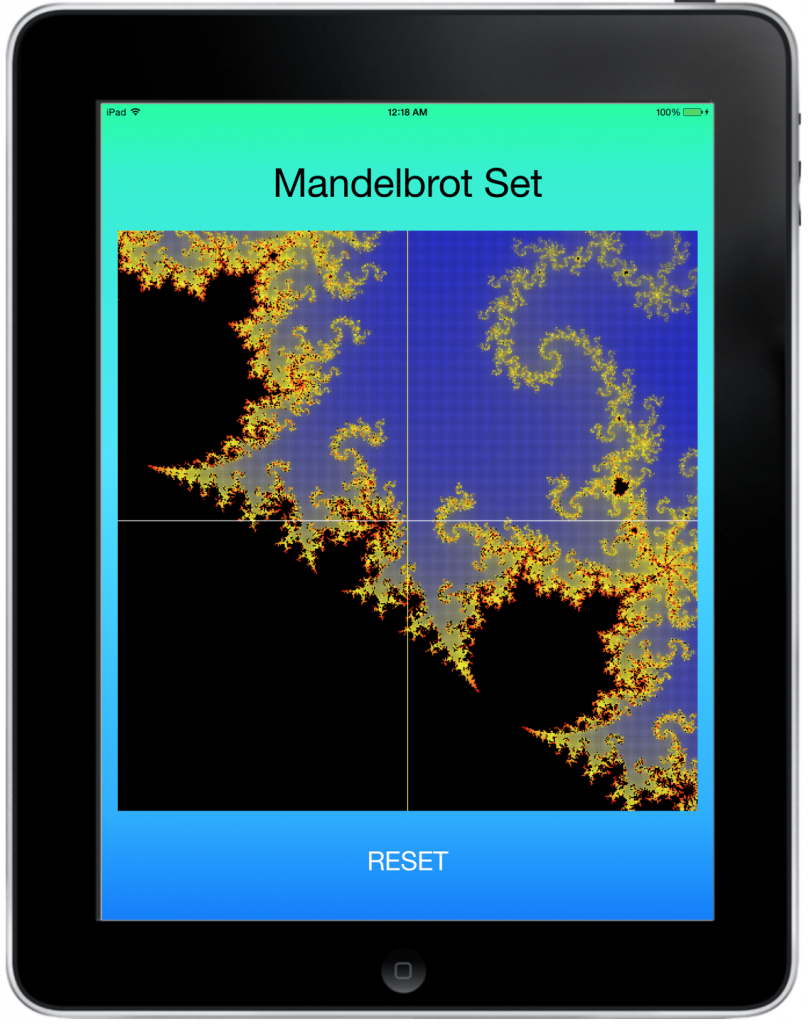

The image above shows a zoomed-in view of one region of the Mandelbrot set. The points in black are inside the set and those in blue are outside it. Much of the artistic appeal of the set depends on the color scheme you choose for the points near the boundary. I reused the color scheme from my C++/OpenGL tutorial, and much of the code in the Model was ported directly from C++ to Objective-C.

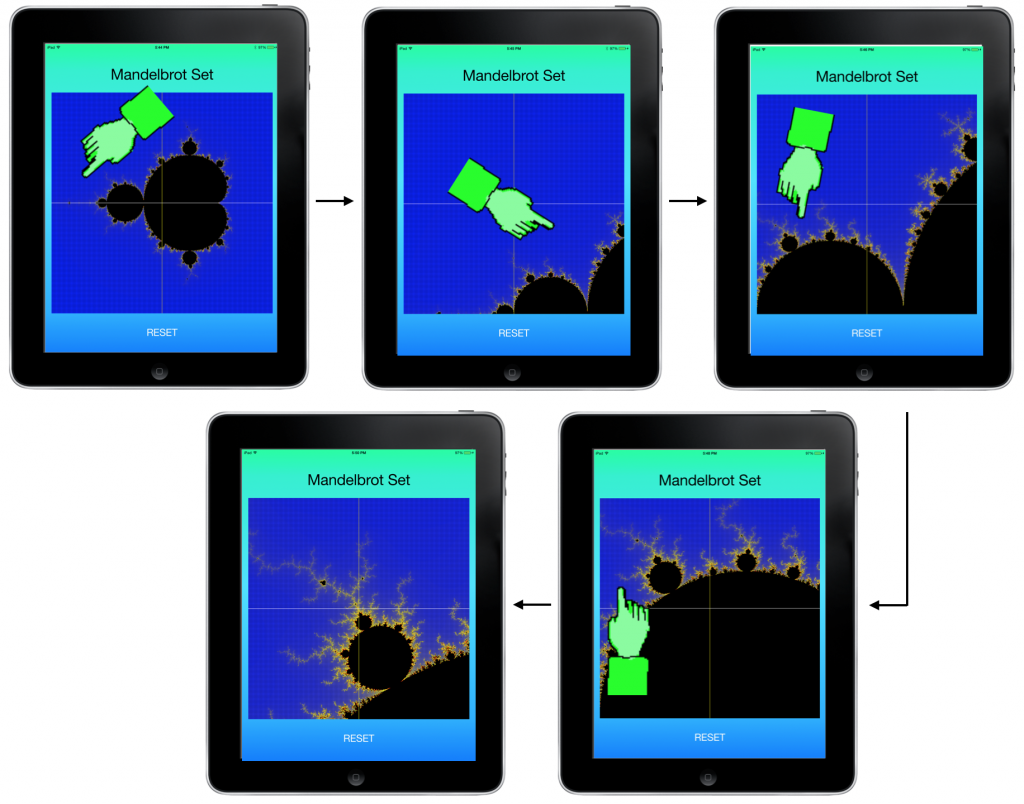

The displayed image is divided into four quadrants by the white lines. You choose where to zoom in by tapping the corresponding block. For example, here is a sequence of touch events starting from the initial view of the set:

Naming the blocks using 1 (top-left), 2 (top-right), 3 (bottom-left) and 4 (bottom-right), the journey above may be abbreviated thus: 1-4-3-1. The large image at the beginning of this article was obtained using the following sequence: 1-4-4-1-3-3. The possible journeys are infinite and one can probably spend several lifetimes exploring every nook and cranny.
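
To make the journey notation concrete, here is a minimal sketch in plain C (which also compiles unchanged inside an Objective-C file) that replays a sequence of block numbers and returns the resulting window. The `Window` struct and the functions `zoom_into` and `apply_journey` are hypothetical names introduced for illustration, not part of the app; the starting window is assumed to be the default one used by the Model (x from -2 to 1, y from -1.5 to 1.5).

```c
#include <assert.h>
#include <stddef.h>

/* Rectangular window in the complex plane. */
typedef struct {
    double xmin, xmax, ymin, ymax;
} Window;

/* Zoom into one quadrant: 1 = top-left, 2 = top-right,
   3 = bottom-left, 4 = bottom-right. The top row keeps the upper
   half of the y range because y increases upward in the plane. */
Window zoom_into(Window w, int block)
{
    assert(block >= 1 && block <= 4);
    double xmid = w.xmin + (w.xmax - w.xmin) / 2;
    double ymid = w.ymin + (w.ymax - w.ymin) / 2;

    if (block == 1 || block == 3) w.xmax = xmid; else w.xmin = xmid;
    if (block == 1 || block == 2) w.ymin = ymid; else w.ymax = ymid;
    return w;
}

/* Apply a whole journey, e.g. {1, 4, 3, 1}, starting from w. */
Window apply_journey(Window w, const int *blocks, size_t count)
{
    for (size_t i = 0; i < count; i++) {
        w = zoom_into(w, blocks[i]);
    }
    return w;
}
```

Starting from that window, the journey 1-4-3-1 ends at x from -1.25 to -1.0625 and y from 0.1875 to 0.375. Each tap halves the window in both directions, so four taps shrink its width from 3 to 3/16.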

UNDER THE HOOD

The overall design of this app follows the usual Model-View-Controller strategy. The model consists of the minimum and maximum (x, y) coordinates of the window in the complex plane, the number of divisions along x and y (the resolution), a 2D array (stored flat, row by row) holding the iteration count used to color each point, and a method that determines whether a point belongs to the set. Here is the implementation of the Model class:

    #import "Model.h"

    @implementation Model

    @synthesize xmin, xmax, ymin, ymax;
    @synthesize nx, ny;
    @synthesize MAX_ITER;
    @synthesize iters;

    // override superclass implementation of init
    - (id)init
    {
        self = [super init];
        if (self) {
            nx = 500;
            ny = 500;

            xmin = -2.0;
            xmax = 1.0;
            ymin = -1.5;
            ymax = 1.5;

            MAX_ITER = 200;

            // allocate the flat array that backs the 2D "iters" grid
            iters = [[NSMutableArray alloc] initWithCapacity:nx*ny];

            // initialize the 2D "iters" array, which holds, for each grid
            // point, the number of iterations its orbit takes to escape
            for (int i = 0; i < nx; i++) {
                for (int j = 0; j < ny; j++) {
                    [iters addObject:@(0)];
                }
            }
        }
        return self;
    }

    // Checks whether the point (x,y) is a member of the Mandelbrot set.
    // Returns the number of iterations it takes for the orbit of this point
    // to escape (|z| > 2). If (x,y) is inside the set, the orbit never
    // escapes, so the loop runs for the full MAX_ITER iterations (the slow
    // case) and MAX_ITER is returned.
    - (int)Mandelbrot_Member_x:(double)x and_y:(double)y
    {
        double cx = x, cy = y;
        double zx = 0.0, zy = 0.0;
        int iter = 0;

        while (iter < MAX_ITER)
        {
            iter++;
            double real = zx*zx - zy*zy + cx;
            double imag = 2.0*zx*zy + cy;

            // |z| > 2 is equivalent to |z|^2 > 4, which avoids the sqrt
            if (real*real + imag*imag > 4.0) break; // (x,y) is outside the set

            zx = real;
            zy = imag;
        }
        return iter;
    }

    // update the 2D array which stores "iterations to escape" based on the
    // current window
    - (void)updateMandelbrotData
    {
        double dx = (xmax - xmin)/nx; // grid spacing along X
        double dy = (ymax - ymin)/ny; // grid spacing along Y

        for (int i = 0; i < nx; i++) {
            for (int j = 0; j < ny; j++) {
                double x = xmin + dx/2 + i*dx; // x coordinate of the cell center
                double y = ymin + dy/2 + j*dy; // y coordinate of the cell center

                // calculate iterations to escape
                int iterationsToEscape = [self Mandelbrot_Member_x:x and_y:y];

                // natural (flat) index of cell (i, j)
                int N = i + nx*j;

                // overwrite this entry in place instead of removing and
                // re-inserting it, which would shift the rest of the array
                [iters replaceObjectAtIndex:N withObject:@(iterationsToEscape)];
            }
        }
    }

    @end
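
For readers who want to check the membership test by hand, the same escape-time loop can be sketched as a free-standing C function. The name `escape_iterations` and the `max_iter` parameter are assumptions made for this sketch; the app itself uses the `Mandelbrot_Member_x:and_y:` method and the `MAX_ITER` property from the Model.

```c
#include <assert.h>

/* Escape-time test for the point c = (cx, cy).
   Returns the iteration at which |z| first exceeds 2, or max_iter
   if the orbit stays bounded for the whole iteration budget. */
int escape_iterations(double cx, double cy, int max_iter)
{
    assert(max_iter > 0);
    double zx = 0.0, zy = 0.0;
    int iter = 0;

    while (iter < max_iter) {
        iter++;
        double real = zx * zx - zy * zy + cx;   /* Re(z^2 + c) */
        double imag = 2.0 * zx * zy + cy;       /* Im(z^2 + c) */

        /* |z| > 2 is the same as |z|^2 > 4, which avoids a sqrt */
        if (real * real + imag * imag > 4.0) break;

        zx = real;
        zy = imag;
    }
    return iter;
}
```

With a budget of 200 iterations, c = 0 and c = -1 never escape and return 200, c = 1 escapes on the third iteration (its orbit is 1, 2, 5) and c = 2 + 2i escapes on the first. A point that happens to escape exactly on the last iteration also returns the maximum and is therefore drawn as if it were inside the set.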

There are two views, both subclasses of UIView: one displays the set itself and the other draws the horizontal and vertical centerlines. The implementation of the class that draws the set is provided below for reference:

    #import "DrawMandelbrot.h"

    @implementation DrawMandelbrot

    @synthesize nx, ny;
    @synthesize MAX_ITER;
    @synthesize data;

    - (id)initWithFrame:(CGRect)frame
    {
        self = [super initWithFrame:frame];
        if (self) {
            // Initialization code
        }
        return self;
    }

    - (void)initData
    {
        data = [[NSMutableArray alloc] initWithCapacity:nx*ny];
        for (int i = 0; i < nx; i++) {
            for (int j = 0; j < ny; j++) {
                [data addObject:@(0)];
            }
        }
    }

    - (void)drawRect:(CGRect)rect
    {
        // get the current context
        CGContextRef context = UIGraphicsGetCurrentContext();

        // view size in screen points; the context already applies the
        // screen scale, so no retina-specific factor is needed
        CGFloat width = self.bounds.size.width;
        CGFloat height = self.bounds.size.height;

        // size of one grid cell in screen points
        CGFloat dx = width/nx;
        CGFloat dy = height/ny;

        // assign color based on the number of iterations - Red Green Blue (RGB)
        for (int i = 0; i < nx; i++) {
            for (int j = 0; j < ny; j++) {
                int N = i + nx*j;
                int VAL = [[data objectAtIndex:N] intValue];

                UIColor* fillColor;
                if (VAL == MAX_ITER)
                {
                    fillColor = [UIColor colorWithRed:0.0 green:0.0 blue:0.0 alpha:1.0]; // black
                }
                else
                {
                    // ratio of iterations required to escape
                    // the higher this value, the closer the point is to the set
                    float frac = (float) VAL / MAX_ITER;

                    if (frac <= 0.5)
                    {
                        // blue (frac = 0) to yellow (frac = 0.5) transition
                        fillColor = [UIColor colorWithRed:2*frac green:2*frac blue:1.0 - 2*frac alpha:1.0];
                        //glColor3f(2*frac,2*frac,1-2*frac);
                    }
                    else
                    {
                        // yellow (frac = 0.5) to red (frac = 1) transition
                        fillColor = [UIColor colorWithRed:1.0 green:2.0-2.0*frac blue:0.0 alpha:1.0];
                        //glColor3f(1,2-2*frac,0);
                    }
                }

                // draw colored rectangle; the y axis is flipped because
                // screen y grows downward
                CGFloat x = i*dx;        // screen x coordinate
                CGFloat y = (ny-j-1)*dy; // screen y coordinate

                // named "cell" so it does not shadow the drawRect: parameter
                CGRect cell = CGRectMake(x, y, dx, dy);
                [fillColor setFill];
                CGContextFillRect(context, cell);
            }
        }
    }

    @end

Note that we are using the Core Graphics API to render the 2D color data. This is not necessarily the best approach, but I believe it is the simplest to understand and implement. In the future, I plan to use OpenGL-ES and accelerate the calculation and graphics using GPU computing (CUDA/OpenCL).
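
One way to move away from one `CGContextFillRect` call per cell, sketched here under stated assumptions rather than taken from the app, is to convert the iteration counts into a raw RGBA byte buffer first and hand that buffer to whatever renderer is used (a bitmap image or an OpenGL-ES texture, for instance). The function names `to_byte`, `color_for_iterations` and `fill_pixels`, the 8-bit RGBA layout and the rounding are all choices made for this illustration; the color ramp itself is the one used in `drawRect:`.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>

/* Convert a color component in [0, 1] to a byte, rounding to nearest. */
static uint8_t to_byte(float v)
{
    return (uint8_t)(v * 255.0f + 0.5f);
}

/* Map one iteration count to an RGBA pixel: black inside the set,
   blue -> yellow for the first half of the range, yellow -> red
   for the second half. */
void color_for_iterations(int val, int max_iter, uint8_t rgba[4])
{
    assert(max_iter > 0 && val >= 0 && val <= max_iter);
    float r = 0.0f, g = 0.0f, b = 0.0f;   /* black by default */

    if (val < max_iter) {
        float frac = (float)val / (float)max_iter;
        if (frac <= 0.5f) {
            r = 2.0f * frac;
            g = 2.0f * frac;
            b = 1.0f - 2.0f * frac;
        } else {
            r = 1.0f;
            g = 2.0f - 2.0f * frac;
        }
    }
    rgba[0] = to_byte(r);
    rgba[1] = to_byte(g);
    rgba[2] = to_byte(b);
    rgba[3] = 255;                        /* fully opaque */
}

/* Fill a caller-owned buffer of nx*ny*4 bytes from the flat iteration
   array (index N = i + nx*j). Rows are flipped so that the largest y
   ends up in the first row of the buffer, as on screen. */
void fill_pixels(const int *iters, int nx, int ny, int max_iter,
                 uint8_t *pixels)
{
    for (int j = 0; j < ny; j++) {
        for (int i = 0; i < nx; i++) {
            size_t src = (size_t)i + (size_t)nx * (size_t)j;
            size_t dst = (size_t)i + (size_t)nx * (size_t)(ny - 1 - j);
            color_for_iterations(iters[src], max_iter, &pixels[4 * dst]);
        }
    }
}
```

With a maximum of 200 iterations, a count of 0 maps to pure blue, 100 to yellow, 150 to orange (255, 128, 0) and 200 to black. The row flip mirrors the `(ny-j-1)` term in the view, so row 0 of the buffer is the top of the image.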

Finally, the controller mediates between the model and the views and updates the model in response to touch events. It works out which quadrant the user touched, updates the minimum and maximum x and y coordinates accordingly, asks the Model to recalculate the 2D array for the new window, and sends the updated data to the View for rendering. Here is the Controller implementation:

    #import "ViewController.h"
    #import "CrossHair.h"
    #import "Model.h"
    #import "DrawMandelbrot.h"

    @interface ViewController ()

    @property (strong, nonatomic) IBOutlet UIView *blackBox;
    @property float width, height;
    @property (strong, nonatomic) CrossHair* cross;
    @property (strong, nonatomic) Model* model;
    @property (strong, nonatomic) DrawMandelbrot* drawSet;

    - (IBAction)backToSquareOne:(id)sender;

    @end

    @implementation ViewController

    @synthesize blackBox;
    @synthesize width, height;
    @synthesize cross;
    @synthesize model;
    @synthesize drawSet;

    - (void)viewDidLoad
    {
        [super viewDidLoad];
        // Do any additional setup after loading the view, typically from a nib.

        // black box view dimensions
        width = blackBox.frame.size.width;
        height = blackBox.frame.size.height;

        // initialize the model
        model = [[Model alloc] init];

        // get window size
        CGRect viewRect = CGRectMake(0, 0, width, height);

        // initialize the Mandelbrot view
        drawSet = [[DrawMandelbrot alloc] initWithFrame:viewRect];
        drawSet.nx = model.nx;
        drawSet.ny = model.ny;
        drawSet.MAX_ITER = model.MAX_ITER;
        [drawSet initData];

        // initialize cross view
        cross = [[CrossHair alloc] initWithFrame:viewRect];
        [cross setBackgroundColor:[UIColor clearColor]];

        // add subviews
        [blackBox addSubview:drawSet];
        [blackBox addSubview:cross];

        // draw the Mandelbrot set
        [self drawMandelbrotSet];
    }

    - (void)didReceiveMemoryWarning
    {
        [super didReceiveMemoryWarning];
        // Dispose of any resources that can be recreated.
    }

    // touch events determine where we need to zoom in
    // +------+------+
    // |      |      |
    // |  1   |  2   |
    // |      |      |
    // +------+------+
    // |      |      |
    // |  3   |  4   |
    // |      |      |
    // +------+------+
    - (void)touchesBegan:(NSSet *)touches withEvent:(UIEvent *)event
    {
        for (UITouch* t in touches) {
            CGPoint touchLocation = [t locationInView:blackBox];
            float x = touchLocation.x;
            float y = touchLocation.y;

            // ignore touches outside the black box; without this check a
            // touch beyond the right edge would map to the wrong block
            if (x < 0 || x >= width || y < 0 || y >= height) continue;

            float dx = width / 2;
            float dy = height / 2;

            // find out which block the finger touched
            int xIndex = x/dx;
            int yIndex = y/dy;
            int N = (xIndex + 2*yIndex) + 1;
            NSLog(@"You selected block %d", N);

            // screen y grows downward, so the top row keeps the upper half
            // of the y range and the bottom row keeps the lower half
            switch (N) {
                case 1:
                    model.xmax = model.xmin + (model.xmax - model.xmin)/2;
                    model.ymin = model.ymin + (model.ymax - model.ymin)/2;
                    break;
                case 2:
                    model.xmin = model.xmin + (model.xmax - model.xmin)/2;
                    model.ymin = model.ymin + (model.ymax - model.ymin)/2;
                    break;
                case 3:
                    model.xmax = model.xmin + (model.xmax - model.xmin)/2;
                    model.ymax = model.ymin + (model.ymax - model.ymin)/2;
                    break;
                case 4:
                    model.xmin = model.xmin + (model.xmax - model.xmin)/2;
                    model.ymax = model.ymin + (model.ymax - model.ymin)/2;
                    break;
                default:
                    break;
            }

            [self drawMandelbrotSet];
        }
    }

    - (void)drawMandelbrotSet
    {
        [model updateMandelbrotData];
        drawSet.data = model.iters;
        [drawSet setNeedsDisplay];
    }

    - (IBAction)backToSquareOne:(id)sender {
        model.xmin = -2.0;
        model.xmax = 1.0;
        model.ymin = -1.5;
        model.ymax = 1.5;
        [self drawMandelbrotSet];
    }

    @end
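
The quadrant lookup can be isolated into a small pure function, which makes it easy to test away from the device. The sketch below is plain C with a hypothetical name, `block_for_touch`; it returns 0 for a touch that falls outside the view so the caller can ignore it, which is an assumption about the desired behavior.

```c
/* Map a touch at (x, y), in view points with the origin at the
   top-left corner, to a block number:
     1 = top-left, 2 = top-right, 3 = bottom-left, 4 = bottom-right.
   Returns 0 when the touch lies outside the width x height view. */
int block_for_touch(double x, double y, double width, double height)
{
    if (x < 0.0 || y < 0.0 || x >= width || y >= height) {
        return 0;
    }
    int xIndex = (int)(x / (width / 2));    /* 0 = left,  1 = right  */
    int yIndex = (int)(y / (height / 2));   /* 0 = top,   1 = bottom */
    return xIndex + 2 * yIndex + 1;
}
```

For a 400 x 400 point view, touches at (100, 100), (300, 100), (100, 300) and (300, 300) give blocks 1, 2, 3 and 4, while a touch at (450, 100) gives 0.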

Notice we have a RESET button to go back to the original window. This merely resets the minimum and maximum x and y limits to their original values.

The entire source code can be cloned from

https://github.com/jabhiji/ios-mandelbrot.git

Happy Xcoding!